Introduction

Applications have grown steadily more complex over time. In response, software architects have kept evolving the way they design and structure backend systems.

In this article, we will trace the evolution of backend software architecture through its key paradigms: N-Layered, Domain-Driven Design (DDD), Hexagonal (Ports and Adapters), Onion, and Clean Architecture. Understanding these styles and how they differ helps developers and architects build robust and maintainable systems.

Where it all began

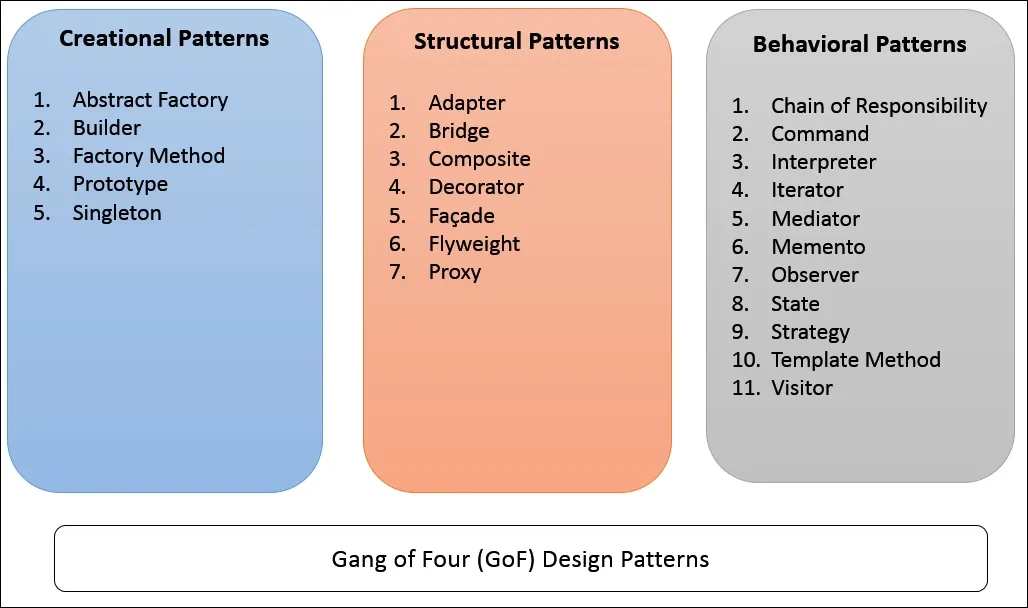

In the good old days, architecture was just a distant dream. Back then, calling yourself an architect meant knowing the ins and outs of the Gang of Four (GoF) patterns. Ah, those blissful times when things were simpler!

But as computers grew more powerful and user demands soared, application complexity skyrocketed. It was clear that a change was needed.

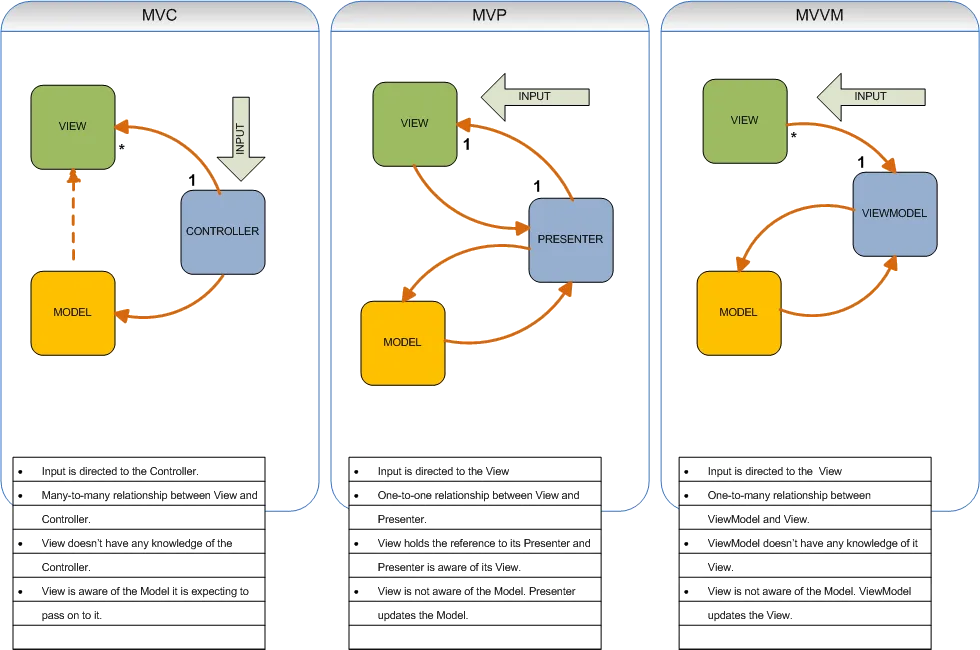

The birth of MVC-like patterns

The first step towards taming the complexity was the separation of UI and business logic. Various MVC-like patterns sprouted depending on the UI framework you were using. If you’re a C# enthusiast like me, you might be familiar with Microsoft’s ASP.NET MVC framework.

Now, let’s play a little guessing game. Among the three components in these patterns (View, Controller, and Model), which one do you think caused the most headaches? Surprisingly, it was the Model. The Model these patterns referred to wasn’t just a set of DTOs; it was the Domain Model, the essence of any application.

As applications grew, the Model became a breeding ground for intricate business rules and complex data manipulation. Developers realized that applying design patterns, such as those from the Gang of Four (GoF), was not enough to keep it under control.

N-Layered Architecture (2002)

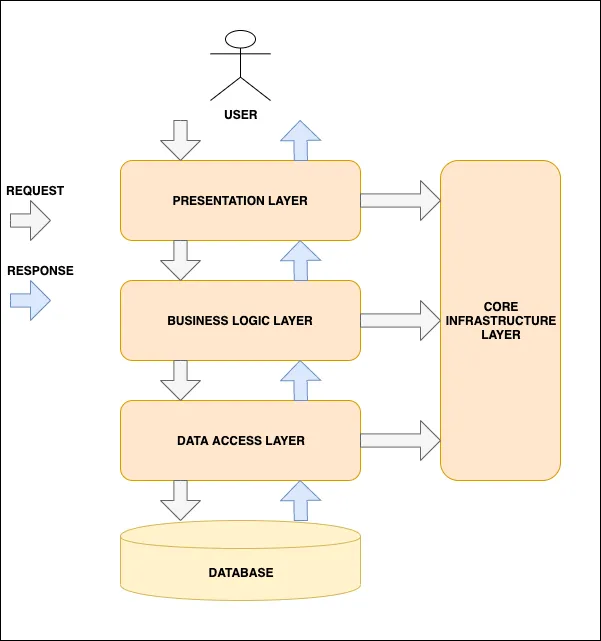

N-Layered architecture, popularized by Martin Fowler’s book Patterns of Enterprise Application Architecture, emerged as a way to manage the growing complexity of applications.

N-Layered architecture is a software design approach that helps organize the code of an application into distinct layers, each with a specific responsibility. Think of it as a way to separate different parts of a building into separate floors or layers, with each floor serving a specific purpose.

In N-Layered architecture, the code is typically divided into three main layers:

- User Interface (UI) Layer: This is the layer that handles the interaction between the user and the application. It deals with the visual elements, user input, and presenting information to the user in a user-friendly way.

- Business Logic Layer (BLL): The BLL is where the core logic of the application resides. It contains the rules and processes that define how the application works, such as calculations, validations, and workflows.

- Data Access Layer (DAL): The DAL is responsible for storing and retrieving data. It interacts with databases or other data sources and hides the storage details behind a consistent interface for the rest of the application.

Each layer has its own set of responsibilities, and the layers interact in a strict top-down order. The UI layer calls the BLL to retrieve or update data, and the BLL, in turn, calls the DAL to read or modify the data in the underlying storage.
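To make the call chain concrete, here is a minimal sketch of the three layers. Every name in it (`ProductDal`, `OrderBll`, `render_total`) and the discount rule are made up for illustration; a real DAL would talk to a database rather than a dictionary.

```python
# Hypothetical three-layer sketch; all names and rules are illustrative.

class ProductDal:
    """Data Access Layer: hides how products are stored (here, a dict)."""

    def __init__(self):
        self._prices = {"book": 12.0, "pen": 1.5}

    def get_price(self, name):
        return self._prices[name]


class OrderBll:
    """Business Logic Layer: pricing rules; talks only to the DAL."""

    def __init__(self, dal):
        self._dal = dal

    def total(self, name, quantity):
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        subtotal = self._dal.get_price(name) * quantity
        # Assumed business rule: 10% discount for 10 items or more.
        return subtotal * 0.9 if quantity >= 10 else subtotal


def render_total(bll, name, quantity):
    """UI layer: formats output; talks only to the BLL."""
    return f"{quantity} x {name} = {bll.total(name, quantity):.2f}"


bll = OrderBll(ProductDal())
print(render_total(bll, "pen", 10))  # prints "10 x pen = 13.50"
```

Notice that the UI function never touches `ProductDal` directly. Each layer only knows about the one right below it.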

The benefit of N-Layered architecture is that it promotes modularity, separation of concerns, and maintainability. By dividing the code into distinct layers, developers can focus on one responsibility at a time without worrying about the details of other layers. It also makes testing easier, since each layer can be tested on its own as long as the layer beneath it can be replaced with a stand-in.

Domain-Driven Design (DDD)

As applications continued to evolve, it became evident that N-Layered architecture alone was not enough to address the complexities inherent in the Model component. This realization led to the advent of Domain-Driven Design (DDD) by Eric Evans in 2003.

DDD introduced a paradigm shift in software architecture by placing a stronger emphasis on the Domain Model, the heart of the application. Evans advocated for a rich and expressive Domain Model that captured the core business concepts and rules. He encouraged developers to align their software design with the business domain and to share a ubiquitous language with domain experts, bridging the gap between technical implementation and business requirements.
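As a rough illustration of what a rich Domain Model can look like, here is a small sketch. The `Order` entity, its rules, and `DomainError` are hypothetical and not taken from Evans's book. The point is only that the business rules live inside the entity, and that method names such as `add_line` and `submit` use the words the business would use.

```python
from dataclasses import dataclass, field


class DomainError(Exception):
    """Raised when a business rule would be violated."""


@dataclass
class Order:
    """Rich entity: state changes go through methods that enforce the rules."""

    order_id: str
    lines: dict = field(default_factory=dict)  # product name -> quantity
    submitted: bool = False

    def add_line(self, product, quantity):
        # Assumed rule: a submitted order is frozen.
        if self.submitted:
            raise DomainError("a submitted order cannot be changed")
        if quantity <= 0:
            raise DomainError("quantity must be positive")
        self.lines[product] = self.lines.get(product, 0) + quantity

    def submit(self):
        # Assumed rule: an order needs at least one line.
        if not self.lines:
            raise DomainError("an empty order cannot be submitted")
        self.submitted = True


order = Order("o-1")
order.add_line("book", 2)
order.submit()
```

Compare this with a plain DTO that exposes public fields and leaves it to every caller to remember the rules.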

With DDD, the architecture extended beyond the traditional UI, BLL, and DAL layers. It described four layers: Presentation, Application, Domain, and Infrastructure. The Presentation Layer handled user interaction, the Application Layer coordinated use cases, the Domain Layer encapsulated the business logic, and the Infrastructure Layer dealt with external dependencies. This architecture empowered developers to focus on the domain-specific aspects of the application while ensuring a separation of concerns across different layers.

The evolution from N-Layered architecture to DDD highlighted the importance of the Model component and its impact on the overall architecture. By embracing DDD, developers gained a deeper understanding of the domain and were able to design systems that better aligned with business requirements.

However, the journey didn’t end there. Software architects and practitioners continued to refine these architectural paradigms, leading to the emergence of Hexagonal, Onion, and Clean Architecture, each building upon the lessons learned from previous approaches.

Hexagonal (Ports and Adapters) Architecture (2005)

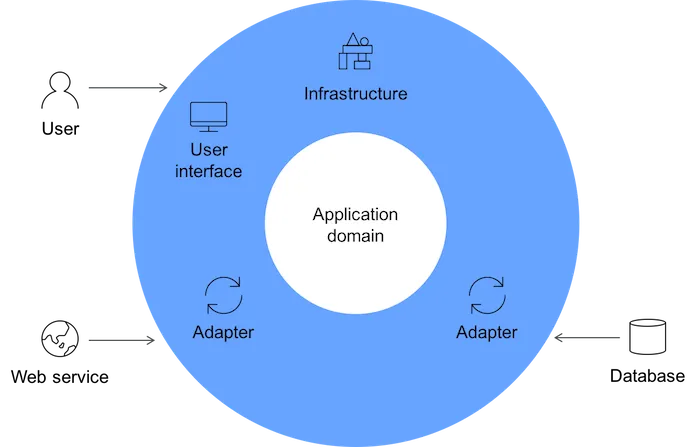

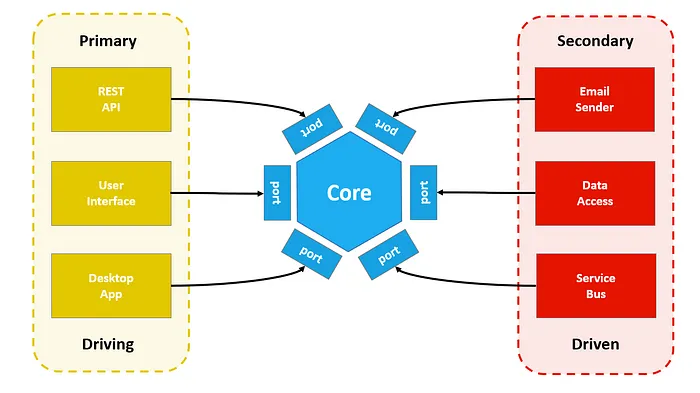

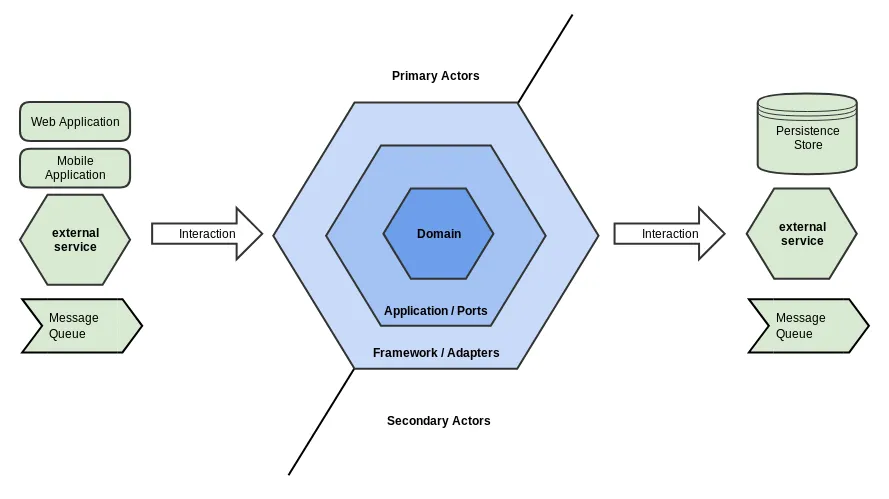

Hexagonal architecture, also known as Ports and Adapters and introduced by Alistair Cockburn, is a design approach that aims to create highly modular and flexible software systems. Think of it as a way to build a software system with a central core and interchangeable components that can be plugged into it, much like connecting different devices to a power outlet with different adapters.

In Hexagonal architecture, the core of the system is drawn as a hexagon, which represents the essential business logic or domain of the application. This core is completely independent of external concerns such as databases, user interfaces, or external services. It focuses solely on encapsulating the key functionalities and rules that make the application unique.

Surrounding the core are the ports and adapters that connect it to the external world. A port is an interface that belongs to the core. It defines either the operations the core offers to the outside or the operations the core expects the outside to provide, without saying how they are performed.

The adapters sit outside the core and handle the technical details. Some adapters implement a port on behalf of an external system, such as a database or a third-party service. Others, such as a web UI or a command-line interface, translate incoming requests into calls on a port.

The key idea behind Hexagonal architecture is that the core remains decoupled from the specific implementations of the adapters. This allows for flexibility and extensibility, as different adapters can be developed or swapped out without affecting the core. For example, you can replace a database adapter with a different one or switch from a web-based UI to a command-line interface, all without modifying the core business logic.
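Here is a small sketch of that swap, assuming a made-up greeting application. The port `GreetingStore`, the core class `Greeter`, and both adapters are hypothetical names. The SQLite adapter uses Python's standard `sqlite3` module with an in-memory database so the example runs on its own.

```python
import sqlite3
from typing import Protocol


class GreetingStore(Protocol):
    """Port: what the core needs from the outside world."""

    def load_greeting(self, language: str) -> str: ...


class Greeter:
    """Core logic: depends only on the port, never on a concrete adapter."""

    def __init__(self, store: GreetingStore):
        self._store = store

    def greet(self, name: str, language: str) -> str:
        return f"{self._store.load_greeting(language)}, {name}!"


class InMemoryStore:
    """Adapter backed by a plain dict."""

    def __init__(self, greetings):
        self._greetings = greetings

    def load_greeting(self, language):
        return self._greetings[language]


class SqliteStore:
    """Adapter backed by a SQLite table named 'greetings' (assumed schema)."""

    def __init__(self, connection):
        self._conn = connection

    def load_greeting(self, language):
        row = self._conn.execute(
            "SELECT phrase FROM greetings WHERE language = ?", (language,)
        ).fetchone()
        return row[0]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE greetings (language TEXT PRIMARY KEY, phrase TEXT)")
conn.execute("INSERT INTO greetings VALUES ('en', 'Hello')")

# The same core works with either adapter plugged in.
for store in (InMemoryStore({"en": "Hello"}), SqliteStore(conn)):
    print(Greeter(store).greet("Ada", "en"))  # prints "Hello, Ada!" both times
```

`Greeter` never imports `sqlite3` and has no idea which adapter it received. That is the decoupling the hexagon is meant to protect.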

Onion Architecture (2008)

As developers sought a clearer code structure and a more explicit dependency flow, Onion architecture built on the ideas of Hexagonal architecture. Where Hexagonal architecture mainly separates the inside of the application from the outside, Onion architecture also spells out how the inside is layered and which way dependencies may point.

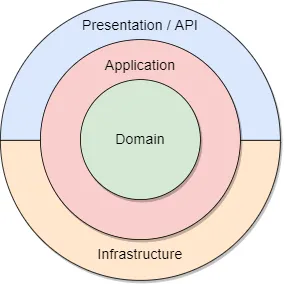

Onion Architecture, introduced by Jeffrey Palermo, is a software design approach that emphasizes the organization of code around the core business logic or domain. Think of it as building layers around the core, much like the layers of an onion, with each layer serving a specific purpose.

In Onion Architecture, the core represents the heart of the application, containing the essential business rules and behavior. The core is surrounded by layers that progressively move outward, with each layer encapsulating a different aspect of the application.

The innermost layer is the Domain layer, which represents the core business entities and their associated behavior. This layer is independent of any external dependencies and focuses solely on implementing the business rules.

Moving outward, the next layer is the Application layer. This layer contains the application-specific logic and orchestrates the interaction between the domain entities. It defines use cases, workflows, and application-specific rules.

The outermost ring holds the Infrastructure and Presentation layers side by side. The Infrastructure layer deals with external concerns such as databases, file systems, network communications, and external services. It provides implementations for data access, logging, email notifications, and other infrastructure-related functionality.

The Presentation layer is responsible for user interfaces and interactions. This can include web interfaces, desktop applications, APIs, or any other means of user interaction.

The key principle of Onion Architecture is that the dependencies flow inward, from the outer layers towards the core. This means that the inner layers, such as the Domain layer, remain independent of the outer layers and do not rely on specific technologies or frameworks.
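The inward-only rule is simple enough to check mechanically. The sketch below is a toy checker, not a real tool. The `RANK` table and the `violations` function are made up for illustration, and it assumes that both Infrastructure and Presentation sit further out than the Application layer.

```python
# Lower rank means closer to the core. Layer names follow the article;
# the ranks themselves are an assumption made for this sketch.
RANK = {"domain": 0, "application": 1, "infrastructure": 2, "presentation": 2}


def violations(dependencies):
    """Return the (importer, imported) pairs that point away from the core."""
    return [(src, dst) for src, dst in dependencies if RANK[dst] > RANK[src]]


deps = [
    ("application", "domain"),        # inward: allowed
    ("infrastructure", "domain"),     # inward: allowed
    ("presentation", "application"),  # inward: allowed
    ("domain", "infrastructure"),     # outward: the core reaching for a database
]

print(violations(deps))  # prints [('domain', 'infrastructure')]
```

In a real project the dependency pairs would come from inspecting imports or project references rather than from a hand-written list.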

By adhering to this architecture, applications become more modular, maintainable, and testable. The separation of concerns allows developers to focus on specific layers without being tightly coupled to other layers. It also enables the interchangeability of components and external dependencies, making the application adaptable to changes in technology or requirements.

Clean Architecture (2012)

Clean Architecture came next. Rather than replacing Onion Architecture, it pulled the ideas shared by Hexagonal, Onion, and similar architectures into a single set of rules.

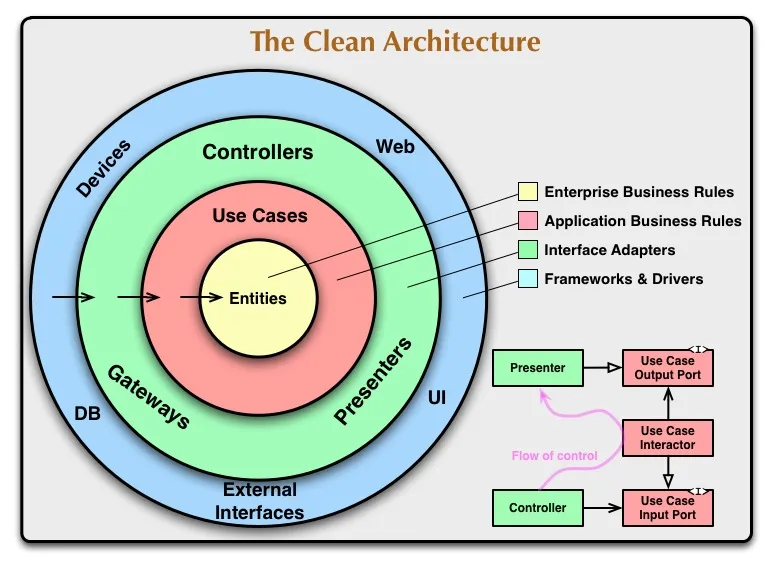

Clean Architecture, proposed by Robert C. Martin, is a software design concept that promotes a clear and modular structure for building applications. It emphasizes separating different parts of the codebase based on their responsibilities and dependencies, making the code more maintainable and testable.

In simple terms, Clean Architecture organizes code in concentric circles. The innermost circles hold the core business logic (entities and use cases). The outer circles hold interface adapters such as controllers and presenters, followed by frameworks and drivers such as the UI and the database. Source code dependencies may only point inward.

The key idea behind Clean Architecture is to keep the core business logic independent of external frameworks, databases, or user interfaces. This independence allows those outer details to be changed or replaced without affecting the core.

By enforcing strict boundaries and dependency rules, Clean Architecture enables developers to write code that is highly decoupled, reusable, and adaptable. It also encourages the use of interfaces and dependency injection to facilitate unit testing and make the codebase more maintainable.
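A short sketch shows how interfaces and dependency injection make a use case testable without a database. Everything here is hypothetical: `UserGateway`, `RegisterUser`, and `FakeGateway` are names invented for this example, and the validation rule is deliberately simplistic.

```python
from abc import ABC, abstractmethod


class UserGateway(ABC):
    """Boundary owned by the use-case layer; outer layers implement it."""

    @abstractmethod
    def email_exists(self, email: str) -> bool: ...

    @abstractmethod
    def save(self, email: str) -> None: ...


class RegisterUser:
    """Use case: knows the rules, not the database or the web framework."""

    def __init__(self, gateway: UserGateway):
        self._gateway = gateway  # injected dependency

    def execute(self, email: str) -> str:
        email = email.strip().lower()
        if "@" not in email:  # deliberately naive check for the sketch
            return "invalid email"
        if self._gateway.email_exists(email):
            return "already registered"
        self._gateway.save(email)
        return "registered"


class FakeGateway(UserGateway):
    """Test double standing in for a real database adapter."""

    def __init__(self):
        self.saved = set()

    def email_exists(self, email):
        return email in self.saved

    def save(self, email):
        self.saved.add(email)


use_case = RegisterUser(FakeGateway())
print(use_case.execute("Ada@Example.com"))  # prints "registered"
print(use_case.execute("ada@example.com"))  # prints "already registered"
```

In production, the same `RegisterUser` would receive a gateway backed by a real database, and the use case itself would not change.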

Conclusion

Backend software architecture has evolved significantly to address the escalating complexity of applications. From N-Layered to DDD, Hexagonal, Onion, and Clean Architecture, each paradigm offers its own approach to structuring and organizing backend systems. Understanding these styles helps developers and architects make informed decisions when designing and maintaining software. Applied well, they help teams build robust, scalable, and maintainable backend systems that stay aligned with the business domain and leave room for future growth.